In 2014 we spent the better part of a year trying to predict bestsellers from manuscripts. The hypothesis was straightforward: somewhere in the text of a book, in its language, structure, and topical content, there has to be signal that correlates with how the book will sell. If you could extract that signal at scale, you could build a tool that read a manuscript and gave a literary agent a defensible prior on its commercial potential. Agents receive seventy or eighty unsolicited submissions a day. Most go in the slush pile. The opportunity, in theory, was to flag the manuscript that should not be there.

We built it. The system accepted manuscripts as email attachments, ran them through a pipeline of classifiers, and emailed back an analysis within seconds. The technical work was substantial. We trained on hundreds of thousands of books. The actual sales data is not public, then or now. We worked through the dimension-reduction problems that come with extremely sparse text matrices. We tried support vector machines, naive Bayes variants, latent Dirichlet allocation for topic discovery, latent semantic analysis, stochastic SVD for finding comparable titles. We integrated readability metrics, syllable counts, sentiment indicators, gender and tense features, named entity recognition. The results were credible.

We piloted with several of the largest literary agencies. Agents sent us manuscripts. The system returned classifications, comp titles, topic summaries, writing-style metrics. There was real interest. The work ended up sitting on shelves and not in workflows.

Two reasons it did not break through, both worth examining because they apply directly to how we think about metadata work today.

The first reason is that some manuscripts that scored well were poorly written. This is not actually a refutation of the model. The history of publishing is full of poorly written commercial successes. Twilight was widely panned for prose quality and sold over 160 million copies. The model was answering "is this commercially viable" rather than "is this well-written," and those are different questions. But for an agent, the distinction was a problem. Agents make their living on pattern matching across thousands of manuscripts. When a model recommended manuscripts the agent's pattern-recognition would have rejected, the agent did not have a way to evaluate whether the model was right or simply confused. The cost of being wrong, for an agent, is opportunity cost on the time spent shopping a book to editors. They were not going to take that risk on the basis of a score.

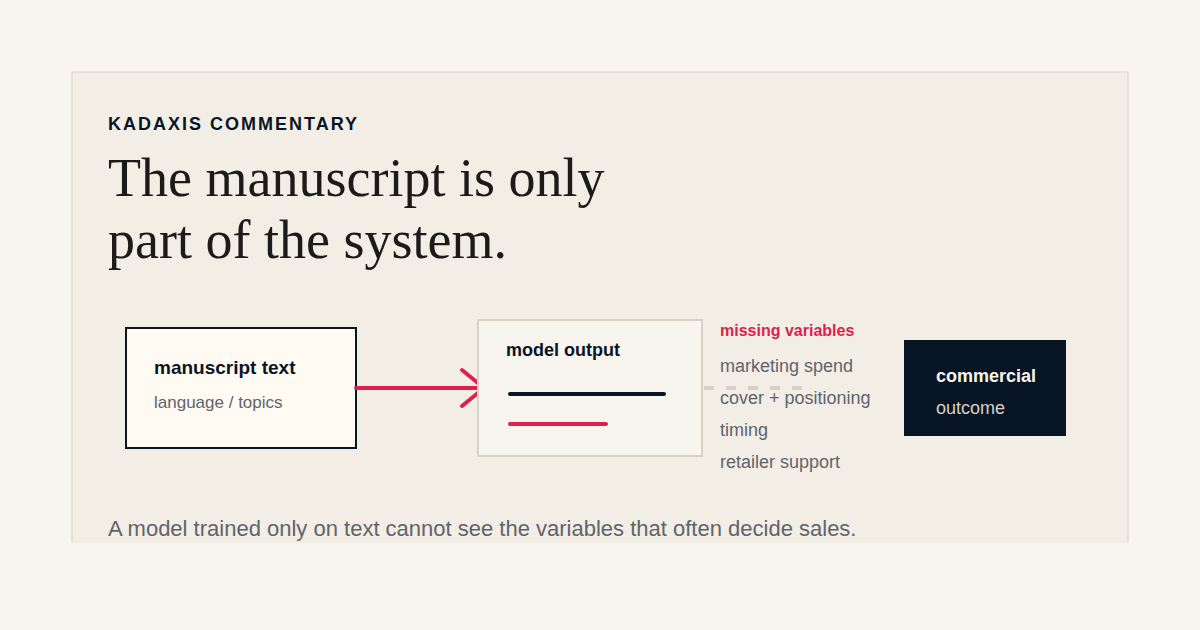

The second reason is more important and shifted how we think about all of this. The model was trying to predict an outcome (commercial success) using only one of the inputs to that outcome (the manuscript text). The other inputs (marketing investment, the publisher's existing relationships with retailers and reviewers, cover and design, timing, comp-title positioning, sometimes a single early endorsement) are not in the manuscript. They cannot be extracted from the manuscript. They are decisions made later, by people with budgets and judgment. A great manuscript with no marketing spend behind it is almost guaranteed to underperform. A merely good manuscript with significant marketing spend behind it can become a phenomenon.

This was the lesson we took away: the manuscript is necessary but not sufficient. Predictions about commercial outcome that ignore the marketing variable are predictions about a partial system. They will be right sometimes and wrong sometimes, and there is no way to know in advance which side of the line a given prediction sits on.

That lesson is what shaped the work we have done since. The keyword and metadata work is a different proposition. It operates on the variables that can be controlled, by a publisher, in service of the marketing investment that determines outcomes. We are not predicting whether a book will sell. We are giving the marketing investment a fair chance to find its audience, by closing the gap between how the book is described in metadata and how its likely readers are searching.

This is a more modest claim than "we can predict your bestsellers." It is also a claim we can stand behind, because the variables involved are inside the publisher's control rather than upstream of it. A book with strong metadata, in a category where the marketing investment is appropriate, is better positioned in retailer search than the same book with weak metadata. That is a defensible statement of cause and effect. "This manuscript will sell 50,000 copies" is not.

There are vendors in the publishing space who still claim some version of bestseller prediction from manuscript text alone. We are not one of them. The work we tried in 2014 produced real results and real limits, and the limits were instructive. The tools we have built since then are the answer to the question of what could actually be done well, not what would be impressive to claim.

The honest story is the more durable story. Anything that promises an outcome it cannot independently produce is going to fail at the moment when failure is most expensive, which is after the publisher has bought in.