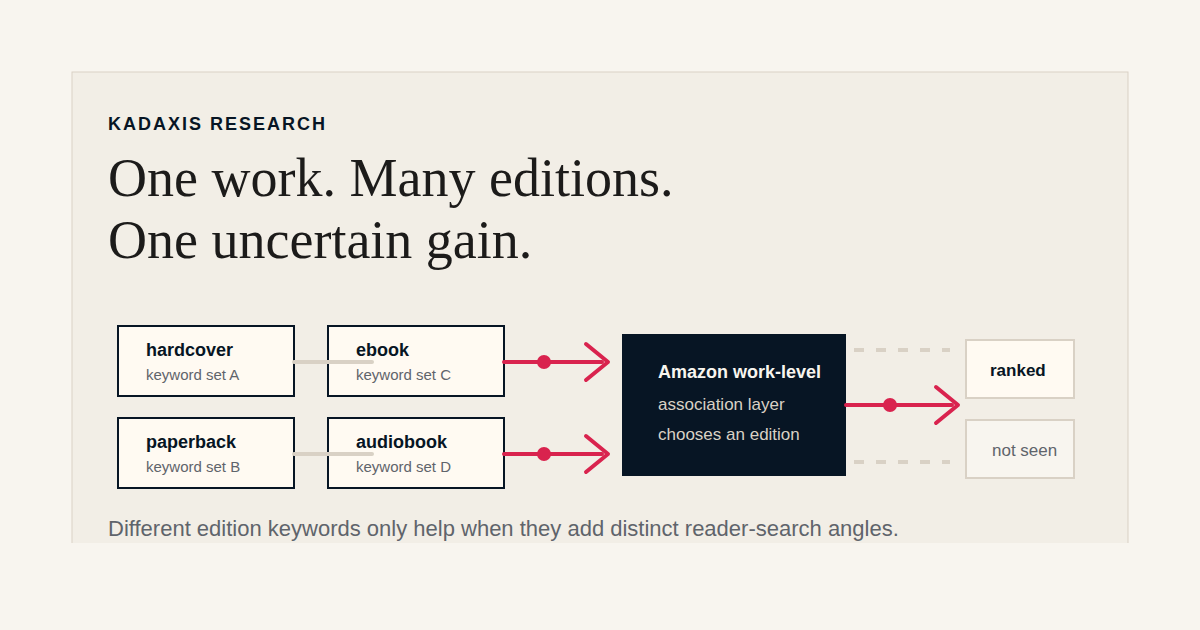

A common piece of metadata advice in publishing is to assign different keywords to the different format editions of the same title. The hardcover gets one set of keywords, the paperback gets a different set, the ebook gets a third, the audiobook a fourth. The theory is that the marketplace will treat each ISBN's keyword field as additional surface area for the same underlying work, so spreading the keywords across editions expands the total set of searches the work can show up for.

The theory is plausible. The behavior would be operationally useful if it were reliable. It also has not, to our knowledge, been tested rigorously in public. So we tested it on Amazon, which is where the conventional advice is usually applied.

The short version: the phenomenon exists in specific cases, but our evidence does not support the broader claim that differentiated per-format keyword assignment reliably expands a work's search surface. Even the cleanest cases we found had partial alternative explanations.

What we did

We looked at publisher-assigned ONIX keywords for several thousand multi-format works and identified the cases where the different ISBN editions had been assigned different keyword sets rather than identical ones. About thirty-five percent of multi-format works in our cleanest slice (English-only titles, same publisher across editions, keywords present on at least two editions) had genuinely differentiated keyword sets across editions. The majority had identical or near-identical sets across formats.
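The comparison described above can be sketched in a few lines. This is a minimal illustration, not the pipeline we ran: it assumes each edition's keywords arrive as a single semicolon-delimited string, and the function names (`parse_keywords`, `unique_keywords`, `is_differentiated`) and the "at least two editions carry something unique" threshold are hypothetical choices made for the example.

```python
def normalize(phrase: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(phrase.lower().split())


def parse_keywords(raw: str) -> set[str]:
    """Split a semicolon-delimited keyword string into normalized phrases.

    Assumption: keywords are delimited by semicolons in the source record.
    """
    return {normalize(p) for p in raw.split(";") if p.strip()}


def unique_keywords(editions: dict[str, str]) -> dict[str, set[str]]:
    """Map each edition label to the phrases that appear on no other edition."""
    parsed = {label: parse_keywords(raw) for label, raw in editions.items()}
    result = {}
    for label, phrases in parsed.items():
        # Union of every other edition's phrases (empty set if there are none).
        others = set().union(*(p for other, p in parsed.items() if other != label))
        result[label] = phrases - others
    return result


def is_differentiated(editions: dict[str, str]) -> bool:
    """Hypothetical threshold: at least two editions each carry a unique phrase."""
    unique = unique_keywords(editions)
    return sum(1 for phrases in unique.values() if phrases) >= 2


# Illustrative input only. "family secrets" is an invented shared phrase,
# added so the example has something to subtract.
editions = {
    "hardcover": "Eight Hundred Grapes; 800 grapes; Lisa Jewell; family secrets",
    "paperback": "houseboat mystery; San Francisco Bay suspense; family secrets",
}

for label, phrases in unique_keywords(editions).items():
    print(label, sorted(phrases))
```

The phrases left over after the subtraction are the candidates worth testing in live search, since only they can show whether an edition's keyword field adds reach the other editions do not already supply.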

For the differentiated works, we tested whether the unique keywords on one edition's ONIX record produced search results that surfaced any edition of the work, on any Amazon search index (Books, All, Kindle, Audiobook). The proof bar was specific: a keyword unique to ISBN A had to rank the work in Amazon search, and that result had to survive a check against the work's visible metadata. We wanted to rule out simple overlap with title, description, or category copy that Amazon could already see. For the strongest cases, we also tested whether Amazon was responding to the exact unique phrase or to a broader component of it, by running each ranked phrase against its component terms and rejecting cases where the broader term also ranked.
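The proof bar can be written down as a small predicate. The sketch below is a simplification under stated assumptions: `ranks_work` is a hypothetical callable standing in for a manual or scripted search check (we are not describing any Amazon API), the visible-metadata check is reduced to a plain substring match, and "component terms" are taken to mean every shorter contiguous run of words in the phrase.

```python
from typing import Callable


def component_terms(phrase: str) -> list[str]:
    """Every contiguous sub-phrase that is shorter than the full phrase."""
    words = phrase.split()
    n = len(words)
    return [
        " ".join(words[i:j])
        for i in range(n)
        for j in range(i + 1, n + 1)
        if j - i < n
    ]


def passes_proof_bar(
    phrase: str,
    visible_metadata: str,
    ranks_work: Callable[[str], bool],
) -> bool:
    """Apply the three checks in order: ranks, not visible, not explained by a part.

    ranks_work is a hypothetical stand-in: it should return True when the
    query surfaces any edition of the work in search results.
    """
    phrase = " ".join(phrase.lower().split())
    visible = " ".join(visible_metadata.lower().split())

    if not ranks_work(phrase):
        return False  # the unique keyword does not surface the work at all
    if phrase in visible:
        return False  # title, description, or category copy already explains it
    # Reject the case when any broader component term also ranks the work.
    return not any(ranks_work(term) for term in component_terms(phrase))


# Usage with a stubbed search result set (illustrative data only).
ranked_queries = {"houseboat mystery", "legal thriller", "thriller"}
stub = lambda query: query in ranked_queries

print(passes_proof_bar("houseboat mystery", "A gripping novel.", stub))
print(passes_proof_bar("legal thriller", "A gripping novel.", stub))
```

In the stubbed example the second phrase fails because its component "thriller" ranks on its own, which is the pattern the component check is meant to catch.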

We tested roughly eighty works in live Amazon search.

What we found

Of the seventy-four works tested under the strictest version of the protocol, none fully cleared every test we threw at it. Two came closest, each with limits worth describing in detail.

The first was The Last Thing He Told Me by Laura Dave, a 2021 Scribner novel with hardcover and paperback editions. The hardcover ISBN had keywords including "eight hundred grapes," "800 grapes," and "lisa jewell." The paperback ISBN had keywords including "houseboat mystery" and "san francisco bay suspense." Each of these phrases, when entered into Amazon search, returned the work somewhere in the result set. None of them appeared in the work's visible title, description, contributor list, or category metadata. None of them produced a result that was explainable by a shorter component term.

The paperback-side phrases hold up under further scrutiny. "Houseboat mystery" and "san francisco bay suspense" are descriptive content phrases. They are not author names, not titles of other books, not category labels. The ONIX keyword field is the most plausible explanation for why they surface the work.

The hardcover-side phrases are weaker on closer examination. "Eight hundred grapes" is the title of an earlier Laura Dave novel. "800 grapes" is the same title as a numeric variant. "Lisa jewell" is a contemporary author in the same category. Amazon performs author-adjacency surfacing independently of the ONIX keyword field: a query that is an author's name or the title of one of an author's other works can surface that author's other titles, regardless of whether any ONIX keyword has been assigned to make the connection. We tested this separately and saw it work cleanly in unrelated cases. That means the hardcover-side phrases in Last Thing may be ranking the paperback ASIN for reasons that have nothing to do with the publisher's ONIX work. The keyword field may be supplying signal Amazon was going to generate on its own.

The second close case was Formula One: The Champions, an Ivy Press illustrated history whose two editions had been tagged with different driver-name keywords. Both sets of names produced rankings that survived our checks, though only one edition contributed multiple surviving phrases. Mechanistically this case is cleaner than the Last Thing hardcover side, because the driver names are content-adjacent rather than author-adjacent (the book's author is Maurice Hamilton; the keywords are mid-century F1 drivers). Author-clustering does not explain why the work surfaces for "denis hulme" or "giuseppe nino farina." The asymmetry is what kept us calling it supporting rather than strong: one edition carrying two surviving phrases and the other carrying one is a weaker shape than balanced contribution from both.

Beyond those two cases, the vast majority of the seventy-four works produced either no ranking at all, rankings that collapsed under control checks, or rankings explained by visible metadata Amazon could already see.

How Amazon handles per-edition keywords

Two observations from the data are worth pulling out before turning to interpretation.

When we looked closely at which ASIN actually ranked in response to a keyword from a specific ISBN's ONIX record, it was often not the ASIN you would expect. In the Last Thing He Told Me case, keywords assigned to the hardcover ISBN consistently surfaced the paperback ASIN, not the hardcover. The keywords were doing work; they were just doing it for a different edition than the one their ONIX record was attached to. We saw the same pattern in other cases.

Amazon also makes associations the publisher never supplied. The author-adjacency behavior described above is one example: a query that names an author can surface other books by that author even when the query phrase appears nowhere in any of those books' metadata, and a query that is the title of one of an author's other works can do the same thing. We have tested this directly. Amazon operates this mechanism independently of any ONIX keyword field, and its results are indistinguishable from "the ONIX keyword field worked" unless you specifically test for it.

Both observations point at the same broader truth. Amazon appears to be associating signals at the work and author level, then choosing which edition to surface in response to a given query based on factors other than the per-edition keyword field. Price, availability, format preferences inferred from session behavior, the work's overall popularity, and which edition the user has already viewed or owns all appear to outweigh the per-edition keyword distinction at the surfacing stage. And the keyword field itself sits alongside several autonomous association mechanisms Amazon runs on its own.

That has a practical implication: even when per-edition keyword differentiation appears to work, the edition you tag is not necessarily the edition that benefits, and the keyword field is not necessarily the mechanism doing the work.

What this means

The behavior the per-edition tactic is supposed to produce, where each edition independently widens the work's reach through ONIX keyword indexing, did not occur cleanly even once in seventy-four carefully selected attempts. The two closest cases each had alternative explanations or asymmetric evidence. Even granting the limits of any single research project (depth of result-set checking, time-of-day variance in Amazon search, the inability to see Amazon's internal indexing decisions), this is not a base rate that supports "spread your keywords across editions" as default operational advice.

The more honest reading is that per-edition keyword differentiation can work, but only when the keywords on each edition are genuinely different, genuinely relevant, and targeted at reader searches that the rest of the metadata does not already explain and that Amazon will not generate on its own. That last condition is the one publishers most often miss. Keywords that are author names, titles of the author's other works, or close category labels may rank the work, but they may be ranking it for reasons that have nothing to do with the ONIX field. The slot in the keyword field has been spent; the signal it added is uncertain.

The more common failure mode in our data was differentiated keyword sets that were technically different but not meaningfully different from a reader-query perspective: paraphrases of the same idea, singular-and-plural variants of the same phrase, or two phrases that both pointed at the same broad category. That kind of differentiation is what produces the broader negative result.
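A rough screen for the "technically different but not meaningfully different" pattern can catch the mechanical cases before any live testing. The sketch below is an assumption-heavy illustration: `crude_key` uses a naive plural strip rather than real stemming, it ignores word order, and it cannot detect paraphrases or two phrases that point at the same broad category, which still need human judgment. The function names are hypothetical.

```python
def crude_singular(word: str) -> str:
    """Naive plural strip; an approximation, not a real stemmer."""
    if len(word) > 4 and word.endswith("ies"):
        return word[:-3] + "y"          # mysteries -> mystery
    if len(word) > 3 and word.endswith("s") and not word.endswith("ss"):
        return word[:-1]                # thrillers -> thriller
    return word


def crude_key(phrase: str) -> frozenset[str]:
    """Order-insensitive set of lowercased, crudely singularized tokens."""
    return frozenset(crude_singular(w) for w in phrase.lower().split())


def meaningfully_unique(own: list[str], other: list[str]) -> list[str]:
    """Phrases on one edition with no near-duplicate on the other edition."""
    other_keys = {crude_key(p) for p in other}
    return [p for p in own if crude_key(p) not in other_keys]


# Illustrative keyword lists, invented for the example.
hardcover = ["legal thrillers", "houseboat mystery"]
paperback = ["legal thriller", "courtroom drama"]

print(meaningfully_unique(hardcover, paperback))
```

In this invented example only "houseboat mystery" survives the screen; "legal thrillers" is treated as a duplicate of "legal thriller" on the other edition.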

For a metadata team that has been told to differentiate keywords across editions: the base rate of clean wins is low enough that the time is better spent elsewhere. For a team that wants to do it as a deliberate per-title investment, the bar to clear is higher than the conventional advice suggests. Each edition's keywords have to target a genuinely different reader-search angle that is not explained by the visible metadata and not something Amazon will generate on its own through author-clustering or category-adjacency.

Limits

A few things this research does not establish.

It does not establish that the per-edition tactic cannot work. We found cases that come close. A publisher with deep insight into a specific title, who can identify two genuinely different and genuinely relevant reader queries that map cleanly to two different editions, and that are not phrases Amazon will surface on its own, can plausibly produce a clean case. Our evidence is against reliability and prevalence, not against possibility.

It does not isolate per-edition strategy from underlying keyword quality. As we have shown separately, only about eight percent of publisher-assigned ONIX keywords produce a visible Amazon search result for the assigned book in the first place. The other ninety-two percent fail to surface the book at all, in most cases because the keyword choices themselves duplicate what visible metadata already supplies, target queries too broad to compete in, or do not match the language readers actually use when they search. That is a problem of keyword craft, not edition strategy. If the differentiated keyword sets in our sample drew disproportionately from that broader pool of weaker keyword work, which is a realistic assumption given how widespread the underlying problem is, the low cross-edition success rate partly reflects keyword-craft failures across publisher metadata generally, rather than a specific failure of per-edition spreading. We cannot cleanly separate the two effects in the data we have. A publisher who is already doing strong primary keyword work, and is layering per-edition differentiation on top of that foundation, may see better rates than our base data suggests.

It does not establish anything about how Amazon's search system works internally. We observed inputs and outputs. The internal architecture of how Amazon chooses to associate ONIX signals with works and editions is not visible to us and changes over time. Other retailers may behave differently.

The takeaway

The conventional advice to assign different keywords to different format editions of the same work is not wrong in principle. The phenomenon it describes does exist in some form; we have named specific cases where keyword fields appear to be doing work at the cross-edition level.

It is also rare enough, unreliable enough, and confounded enough by Amazon's own autonomous association behavior that the return on effort does not justify it as a default operational practice. Even the cleanest cases we found had partial alternative explanations or asymmetric evidence. Publishers who spread keywords across editions are often not getting what they think they are paying for: Amazon may be doing the association anyway, or the keyword may be ranking an edition other than the one it was assigned to.

The practical version of the advice we give clients is narrower than the conventional one. Build one strong keyword set first, before thinking about edition-level differentiation. If you have headroom after that, consider per-edition work title by title, only on books where you have specific insight into a genuinely different reader-search angle for one edition versus another. As a backlist-wide templated practice, the return is not there.