Most companies that try to generate book keywords start with the most obvious source: the text of the book itself. We did too. The first version of the system we built, around 2014, was a pipeline that ran novels through extraction models: Stanford NLP for entities, term-frequency analysis, and LingPipe for significant phrases, the standard stack at the time. The output was a long list of words and phrases that showed up often in the text or seemed structurally important. We ran it across thousands of books and looked at what came out.

For some nonfiction categories, the results looked reasonable. A cookbook may contain the words "garlic," "braise," and "Mediterranean." A technical book may contain the terms its audience already knows. In those cases, the text can provide useful raw material. But even there, the text rarely captures the whole search surface. A reader may search for "easy weeknight dinners," "anti inflammatory meal plan," "how to pass PMP exam," or "leadership book for new managers" even when those exact phrases are not central in the manuscript.

Fiction made the failure easiest to see.

Here is the problem in one sentence: a dystopian novel like The Hunger Games does not have characters talking, explicitly, about the dystopian world they live in. They live in it. They do not narrate it. Suzanne Collins did not need her teenage protagonist to think the word "dystopia" because the reader was supplying that frame for her. The book is about dystopia. The book's text is mostly not.

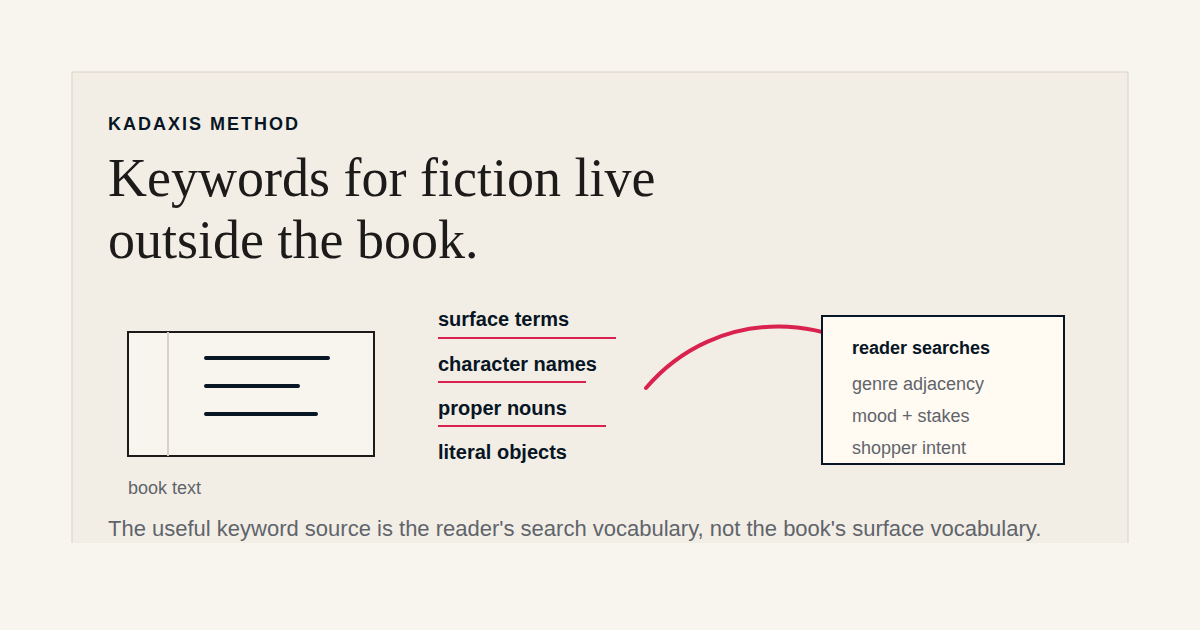

Run The Hunger Games through a naive keyword extractor and you get a long list of nouns and proper nouns: Katniss, Peeta, Capitol, Panem, mockingjay, arena, tracker jackers. Useful for context. Useless for keywords, because no reader who would buy that book is searching Amazon for "tracker jackers." They are searching for "dystopian young adult" or "survival romance" or "girl protagonist fights the government" or any of a dozen genre-adjacent phrases that reflect the experience of reading the book rather than its surface vocabulary.
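To make the failure concrete, here is a minimal sketch of a naive frequency-based extractor. It is not the pipeline described above, which used Stanford NLP and LingPipe; it is a plain term count with a small stopword list. The sample text is an invented stand-in, not a quotation from the novel, and the function name `naive_keywords` is hypothetical.

```python
import re
from collections import Counter

# Tiny illustrative stopword list; a real extractor would use a fuller one.
STOPWORDS = {"the", "a", "an", "to", "of", "and", "out", "toward", "above", "in"}

def naive_keywords(text, top_n=5):
    """Return the most frequent non-stopword terms in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(t for t in tokens if t not in STOPWORDS and len(t) > 2)
    return [term for term, _ in counts.most_common(top_n)]

# Invented stand-in prose written for this example (not text from the book).
sample = (
    "Katniss ran toward the arena wall. Peeta called out to Katniss. "
    "The Capitol watched the arena. Katniss heard a mockingjay above the arena."
)

keywords = naive_keywords(sample)
print(keywords)
```

The top terms are names and setting words. A genre term such as "dystopian" cannot appear in the output, because it never appears in the input.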

This is the gap. The book describes itself in one register. Readers describe what they want in another. Naive keyword extraction treats the first register as the source of truth and produces output that is internally consistent with the book and almost entirely useless for retailer search.

The trap is that the failure is not visible. Run a keyword tool over a fiction list and the output looks plausible. The words are real, the phrases are real, the model is doing its job. A publisher who does not have a way to test the output against actual reader searches has no easy way to know that a tool with a long credible-looking word list is missing the queries that drive the book's category. You only see the failure when the keywords are deployed and search performance does not move.
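One way to make the failure visible before deployment is to measure how much real reader search volume a keyword list touches at all. The sketch below assumes you already have a table of reader queries with volumes. The queries and volume figures here are made up for illustration, and `query_coverage` is a hypothetical helper, not part of any tool.

```python
def query_coverage(keywords, query_volumes):
    """Share of total query volume where at least one keyword
    appears as a whole word in the query text."""
    keyword_set = {k.lower() for k in keywords}
    total = sum(query_volumes.values())
    if total == 0:
        return 0.0
    covered = sum(
        volume
        for query, volume in query_volumes.items()
        if keyword_set & set(query.lower().split())
    )
    return covered / total

# Extractor-style output: nouns and proper nouns from the text.
extracted = ["Katniss", "Peeta", "Capitol", "Panem", "mockingjay", "arena"]

# Hypothetical reader queries with invented relative volumes.
reader_queries = {
    "dystopian young adult": 900,
    "survival romance": 400,
    "girl protagonist fights the government": 100,
}

coverage = query_coverage(extracted, reader_queries)
print(f"Share of query volume covered: {coverage:.0%}")
```

A list can look credible and still score zero on a check like this, which is the point: plausibility of the words says nothing about overlap with what readers type.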

The fix is to invert the problem.

Instead of starting with a book and asking what words it contains, start with the universe of searches readers are actually running and ask which subset of those searches is relevant to a given book. The unit of analysis stops being a book's text and becomes a body of real reader queries, weighted by category and search volume. The book is matched to that universe rather than mined for it.
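The inversion can be sketched in a few lines. This is an illustration under loud assumptions, not our production method: it assumes each reader query has already been labeled with category tags and a search volume, and that the book has been assigned tags from the same vocabulary. The names `ReaderQuery` and `match_queries`, the tags, and the volume numbers are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ReaderQuery:
    text: str
    tags: frozenset  # hypothetical category labels attached to the query
    volume: int      # invented relative search volume

def match_queries(book_tags, queries, top_n=3):
    """Rank reader queries for a book by tag overlap weighted by volume."""
    scored = []
    for query in queries:
        overlap = len(book_tags & query.tags)
        if overlap == 0:
            continue  # query belongs to a different part of the catalog
        relevance = overlap / len(query.tags)
        scored.append((relevance * query.volume, query.text))
    scored.sort(reverse=True)
    return [text for _, text in scored[:top_n]]

# The query universe comes first; the book is matched against it.
queries = [
    ReaderQuery("dystopian young adult", frozenset({"dystopian", "young-adult"}), 900),
    ReaderQuery("survival romance", frozenset({"survival", "romance"}), 400),
    ReaderQuery("cozy mystery series", frozenset({"mystery", "cozy"}), 700),
    ReaderQuery("easy weeknight dinners", frozenset({"cooking"}), 1000),
]

book_tags = frozenset({"dystopian", "young-adult", "survival", "romance"})
matched = match_queries(book_tags, queries)
print(matched)
```

Note that the book's text never enters the function. The only inputs are the book's position in the category space and the query corpus, so every candidate keyword is, by construction, something a reader has searched for.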

This is harder to build. It requires a working corpus of reader queries, which has to be assembled rather than extracted. It requires an understanding of how retailer search algorithms weight different signals. It requires a method for mapping a specific book's category position onto the relevant query cluster. None of those were off-the-shelf inputs in 2014, when we were doing this work, and none of them are in 2026.

But the work is the work. Publisher metadata teams are not optimizing books in a vacuum. They are optimizing for a specific surface (Amazon, Apple, Audible, Kobo) where readers run real queries that the retailer's search engine resolves into ranked results. Any keyword approach that does not start from the reader's side of that interaction is solving a different problem.

The corollary is uncomfortable: most fiction keyword tools on the market today are built entirely on text extraction. They produce credible-looking output that is wrong in a way that is hard to diagnose. The output reads as if it should work. It just does not move search performance for the books it is applied to.

For a publisher evaluating keyword work, the diagnostic question to ask any vendor is: what is the source of the keywords you generate? If the answer is only "the book," the answer is incomplete. For fiction, it is usually badly incomplete. For nonfiction, it may produce useful terms, but it still needs to be checked against how readers describe the problem they are trying to solve, the outcome they want, and the emotional or practical reason they are searching in the first place.

Keywords do not only live inside the book. They live inside the searches readers run when they are looking for the experience, answer, problem, or outcome that the book provides. That distinction is the work.