Comp titles are everywhere in publishing. Editors use them to pitch acquisitions. Sales reps use them to position frontlist with retailer buyers. Marketers use them to brief copywriters and design teams. Algorithms on retailer sites use them to recommend "readers who liked X also liked Y." For a function this central to how books are described, sold, and discovered, the methodology behind generating good comp titles is surprisingly inconsistent.

Most automated comp-title tools on the market are built around one of two approaches. The first compares books on the basis of their text: extract features from the book itself (topics, vocabulary, style, named entities, sentiment), build a similarity model, find books whose features cluster nearby. The second compares books on the basis of their metadata: BISAC codes, genre tags, age range, prose length, descriptive copy. Both approaches are technically respectable. Both produce output that looks reasonable. Neither produces consistently good comps.

We learned this the same way most people in the field learn it: by building both and watching them fail in instructive ways.

The text-based approach was the more technically interesting and the more thoroughly broken. We ran it through stochastic singular value decomposition, the same technique that powers a lot of recommendation systems. We reduced full-length novels down to around 150 dimensions and ran nearest-neighbor searches in that reduced space. The model worked. Books that came back as similar were genuinely textually similar. They used overlapping vocabulary, had structurally comparable prose, addressed adjacent topics. By any technical measure of similarity, the approach was successful.

The problem was that a reader would not have called those books comparable.

A reader, asked to name books like The Seven Husbands of Evelyn Hugo, will reach for things like Daisy Jones and The Six, Malibu Rising, or maybe Lessons in Chemistry: books that share a very specific thing the model could not see, which is the experience of a strong-willed mid-century woman whose life is told to a younger woman by way of interview, with secrets, glamour, and emotional payoff. Two of those titles share an author. None of them necessarily share the closest textual similarity to Evelyn Hugo under SSVD analysis, because their textual surfaces are doing different things. What they share is a reading experience.

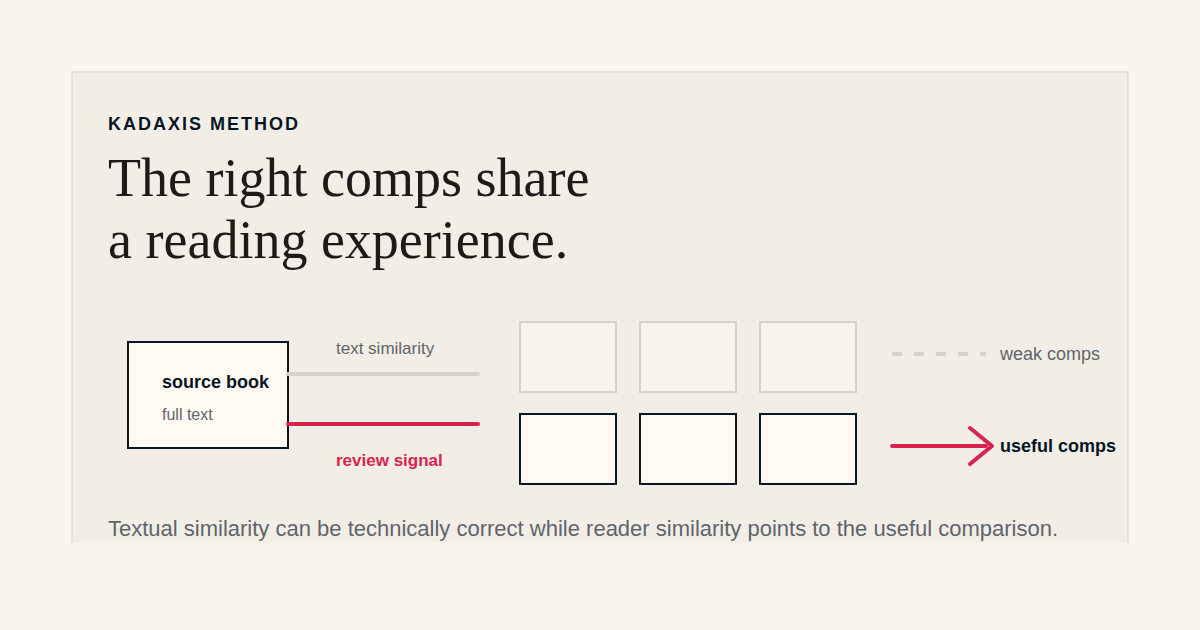

This is the shape of the problem. The text of a book is one specification of that book. The reading experience produced by that text is a different specification. Most of the meaningful comp-title comparisons readers and the trade actually make are operating on the second specification. Models that operate on the first will be technically correct and practically wrong.

The fix is to find a corpus that captures the reading experience rather than the text, and to compute similarity over that corpus instead.

Reader reviews are that corpus. When a few hundred or few thousand readers describe what Evelyn Hugo was like, the words they reliably use cluster around things like "old Hollywood," "secret love," "interview frame story," "queer historical," "twist ending." The words and phrases they use to describe Daisy Jones cluster around overlapping themes (band biography frame story, glamour, doomed love, interview format, secrets). The two clusters share a meaningful overlap. The two books' raw texts may not overlap nearly as much.

A similarity model run on reader-review topic distributions, rather than on book text, produces comp titles that match what a reader would call comparable. We ran this side-by-side with the text-based model on the same books and the difference was not subtle. The review-based model produced comparisons that read as obvious in retrospect. The text-based model produced comparisons that were technically defensible but useful to almost no one.

The implication for publishers is concrete: comp titles supplied by tools that operate on book text alone should be treated as suspect, especially in fiction categories where the reading-experience signal is the operative one. The pitch deck, sales conference, and retailer-buyer conversations are all going to use comp titles whether the publisher supplies them or not. Comps generated from the wrong source produce conversations that go in the wrong direction. A book mis-comped at acquisition gets sold to the wrong audience. A book mis-comped on the retailer surface gets recommended to the wrong reader. Neither failure mode is loud. Both compound across a list.

This is one of the cleaner technical wins available in publishing right now: comp titles produced from a corpus that actually represents how readers describe books, rather than from the text of the books themselves. The methodology is not novel and the math is straightforward. The hard part is having the corpus, the filtering pipeline, and the discipline to use the right input. The payoff is comp titles that feel correct to the people whose pattern-matching matters most: readers, booksellers, and the trade itself.